Introduction

In the rapidly evolving landscape of artificial intelligence, mastering prompt engineering has become an essential skill for developers and AI practitioners alike. As large language models (LLMs) like GPT-4 continue to demonstrate impressive capabilities, the way we craft prompts directly influences the quality, accuracy, and usefulness of their outputs. Whether you’re building chatbots, automating content generation, or designing complex AI workflows, understanding prompt engineering is crucial to harnessing the full potential of these models.

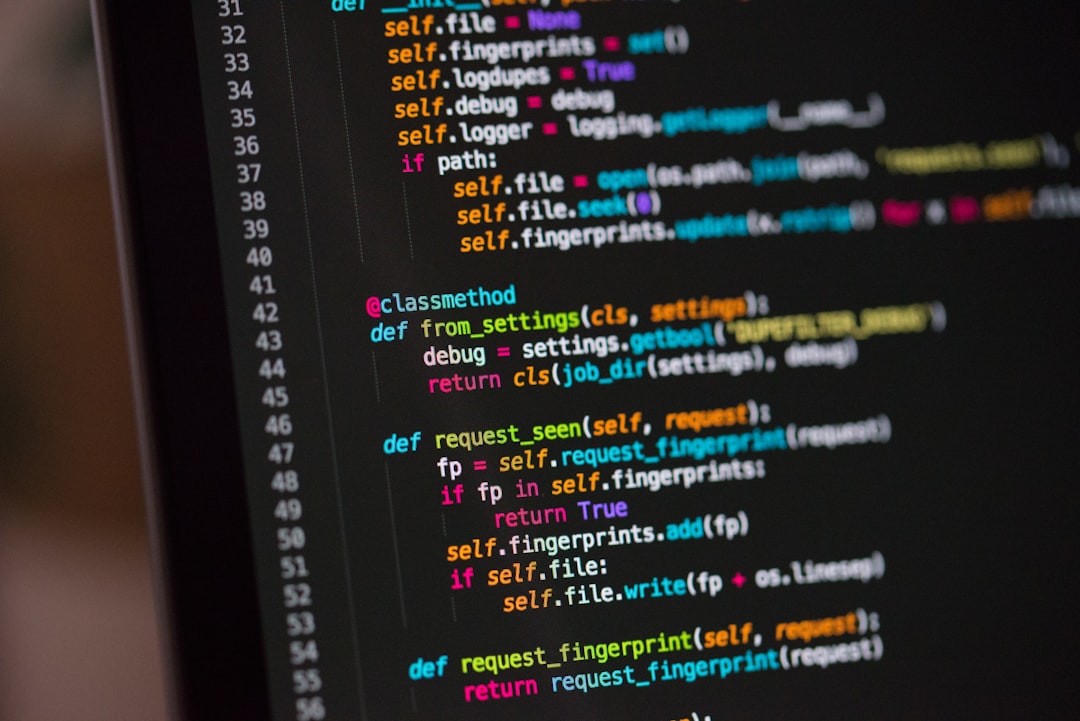

Prompt engineering involves designing, refining, and optimizing inputs—called prompts—to steer AI models toward desired results. Unlike traditional programming, where code logic dictates outcomes, prompt engineering relies on crafting natural language instructions that the AI interprets and executes. This discipline blends creativity with technical precision, requiring a nuanced understanding of language, context, and model behavior.

For developers, mastering prompt engineering unlocks numerous benefits: improved model reliability, reduced ambiguity, enhanced safety, and more efficient workflows. It’s a foundational skill that enables you to develop robust AI applications, troubleshoot issues effectively, and push the boundaries of what generative AI can achieve.

In this comprehensive guide, we will explore prompt engineering examples, common patterns, typical mistakes to avoid, and best practices for creating powerful prompts. Whether you are a beginner or an experienced developer, understanding these principles will elevate your AI projects and help you craft prompts that deliver consistent, high-quality results.

Main Body of the Topic

Developers worldwide are increasingly focusing on prompt engineering as a core competency. This shift is driven by the realization that the quality of AI outputs hinges significantly on how prompts are structured. Several studies and industry reports highlight that well-crafted prompts can increase response relevance by over 50%, reduce errors, and enhance user satisfaction.

Prompt patterns form the backbone of effective prompt engineering. Common patterns include:

- Instruction-based prompts: Clear commands directing the AI to perform specific tasks. Example: “Generate a Python function that sorts a list.”

- Role prompting: Framing the AI as an expert or specialist to improve relevance. Example: “You are a cybersecurity analyst. Explain the latest threat landscape.”

- Chain-of-thought prompting: Encouraging the AI to reason step-by-step for complex tasks. Example: “Break down the process of solving a quadratic equation.”

- Few-shot prompting: Providing examples within the prompt to guide the output format. Example: “Translate these sentences from English to French: [examples].”

Common mistakes in prompt engineering can significantly hinder AI performance. These include:

- Vagueness: Using ambiguous language that leads to unpredictable results.

- Overloading prompts: Combining multiple unrelated tasks in a single prompt, confusing the model.

- Lack of iteration: Failing to refine prompts based on previous outputs, resulting in subpar results.

- Ignoring model limitations: Expecting models to know real-time data or private information they were not trained on.

- Poor structuring: Disorganized prompts lacking clear sections or instructions, which can cause the model to misinterpret intents.

Best practices to avoid these pitfalls involve:

- Being specific and concise: Clearly define the task, desired output format, and constraints.

- Using role-based framing: Assigning a persona or expertise to the model enhances relevance.

- Incorporating examples: Providing context or sample outputs guides the model effectively.

- Iterative refinement: Testing, analyzing outputs, and refining prompts improve results over time.

- Safeguarding against malpractices: Including defensive statements to prevent prompt injection or misuse.

Additionally, understanding how to structure prompts for coding tasks, data analysis, or creative content generation is vital. For example, when generating code, specify the programming language, function purpose, input/output formats, and constraints. For analytical tasks, outline the steps clearly, such as data preprocessing, analysis, and reporting.

First Practical Aspect: Precision in Output

One of the most immediate benefits of prompt engineering is achieving precise outputs tailored to specific needs. Developers often face challenges when AI models generate generic or irrelevant responses, especially in high-stakes domains like finance, healthcare, or legal research. By employing clear instructions, role-based prompts, and examples, developers can guide models to produce highly relevant content.

For instance, instructing the AI to act as a “legal expert specializing in intellectual property law” and asking it to “draft a non-disclosure agreement” results in more authoritative and contextually appropriate drafts. This level of precision reduces revision cycles and enhances trust in AI-generated content.

Second Practical Aspect: Managing Model Limitations

Large language models have inherent limitations, such as lack of real-time data access and understanding of private or confidential information. Prompt engineering helps mitigate these issues by explicitly defining the scope and boundaries of the AI’s knowledge.

For example, instructing the model: “Respond based on information available up to 2023 and do not speculate on future events” ensures outputs stay within expected parameters. Additionally, including disclaimers and safety instructions within prompts helps prevent hallucinations or misinformation, which is critical in sensitive applications like medical advice or financial recommendations.

Third Practical Aspect: Reducing Bias and Malpractice Risks

Prompt engineering also plays a vital role in minimizing biases and preventing malicious use. Developers can embed ethical guidelines directly into prompts—for example, “Provide a balanced view considering multiple perspectives” or “Avoid stereotypes and offensive language.” This proactive approach fosters responsible AI deployment.

Moreover, designing prompts with security in mind, such as including defensive statements to prevent prompt injection or prompt leaking, safeguards the system from adversarial attacks. Regularly testing and refining prompts reduces risks associated with unintended outputs or exploitation.