Introduction

In today’s rapidly evolving technological landscape, AI models are becoming central to countless applications, from chatbots and recommendation engines to autonomous vehicles and healthcare diagnostics. As organizations deploy these models into production environments, the need for robust, scalable, and secure APIs to serve AI capabilities has never been more critical. Building these APIs well, with close attention to performance, authentication, and versioning, is essential for seamless integration, reliable performance, and continuous improvement.

API development for AI is not just about exposing endpoints; it involves designing systems that can handle complex model interactions, authenticate users securely, and manage multiple versions of models without disrupting existing users. Companies must balance performance, security, and flexibility to keep pace with rapid model updates and diverse client requirements. Proper API design facilitates scalable deployment, simplifies maintenance, and enhances user trust—key factors for AI-driven products to succeed in competitive markets.

This tutorial dives deep into the core principles of constructing APIs optimized for AI models. It covers performance optimization, authentication methods, best practices for versioning, and strategies for scaling AI services efficiently. Whether you are an AI developer, backend engineer, or product manager, understanding these practices will help you create resilient APIs that support long-term growth and innovation. From securing sensitive data to managing model updates, this guide covers the critical aspects needed to excel in AI API development.

Key terms explained

Building APIs for AI models with attention to performance, authentication, and versioning means creating structured interfaces that let clients access AI functionality securely and efficiently while managing different iterations of models over time. At its core, an API (Application Programming Interface) is a set of rules and protocols that enable software applications to communicate. When integrated with AI models, APIs serve as the bridge between complex backend models and end-user applications.

Authentication refers to mechanisms that verify the identity of users or systems accessing the AI services; authorization then determines which operations each verified entity may perform. Together they protect sensitive data, prevent misuse, and support compliance with security standards. Common tools include OAuth 2.0 (an authorization framework that issues access tokens), JWT (JSON Web Tokens, a signed token format often used to carry those credentials), API keys, and role-based access control (RBAC), which maps roles to permitted operations. Tying every request to a verified identity also makes it possible to monitor usage, enforce per-client limits, and safeguard computational resources, especially for resource-intensive AI tasks like large dataset processing or real-time inference.
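To make the split between authentication and authorization concrete, here is a minimal Python sketch combining API keys with RBAC. It assumes an in-memory key store, and the role names (`reader`, `admin`) and action names (`predict`, `deploy_model`) are hypothetical; a production service would more likely rely on a database or secrets manager and an established identity provider than on hand-rolled code.

```python
import hashlib
import secrets
from typing import Dict, Set

# Hypothetical role table for an inference service: which actions each role may perform.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "reader": {"predict"},
    "admin": {"predict", "deploy_model"},
}


def hash_key(raw_key: str) -> str:
    # Store only a digest of each key. A fast hash is acceptable here because the
    # keys are long random tokens, not user-chosen passwords.
    return hashlib.sha256(raw_key.encode("utf-8")).hexdigest()


def issue_key(store: Dict[str, str], role: str) -> str:
    """Create a random API key, record its digest and role, and return the raw key once."""
    if role not in ROLE_PERMISSIONS:
        raise ValueError(f"unknown role: {role}")
    raw_key = secrets.token_urlsafe(32)
    store[hash_key(raw_key)] = role
    return raw_key


def authorize(store: Dict[str, str], raw_key: str, action: str) -> bool:
    """Authenticate the key (who is calling?), then apply RBAC (may they do this?)."""
    role = store.get(hash_key(raw_key))
    if role is None:
        return False  # authentication failed: unknown or revoked key
    return action in ROLE_PERMISSIONS[role]


# Usage (the in-memory dict stands in for a real key store)
key_store: Dict[str, str] = {}
reader_key = issue_key(key_store, "reader")
print(authorize(key_store, reader_key, "predict"))        # True
print(authorize(key_store, reader_key, "deploy_model"))   # False
print(authorize(key_store, "not-a-real-key", "predict"))  # False
```

Keeping only digests means a leaked key table does not expose usable keys, and revoking a client is just deleting its entry.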

Versioning in AI APIs addresses the evolution of models over time. AI models are often updated to improve accuracy, reduce bias, or adapt to new data. Without a clear versioning strategy, updates risk breaking existing integrations, causing downtime, or confusing end users. Effective versioning strategies, such as embedding version identifiers in URLs or headers, allow developers to introduce new features while maintaining backward compatibility. This ensures smooth transitions and continuous service availability, even as models evolve.
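A minimal Python sketch of version resolution follows. It assumes that a URL path prefix such as `/v2/` takes precedence over a header, uses a hypothetical `X-API-Version` header name, and routes to toy stand-in model functions. The design choice worth noticing is that `v2` only adds fields, so clients written against `v1` keep working.

```python
import re
from typing import Callable, Dict, Mapping


def predict_v1(text: str) -> dict:
    # Toy stand-in for a real model: keyword-based sentiment.
    return {"label": "positive" if "good" in text.lower() else "negative"}


def predict_v2(text: str) -> dict:
    # v2 keeps every v1 field and only adds new ones (a backward-compatible change).
    result = predict_v1(text)
    result["num_tokens"] = len(text.split())
    return result


MODEL_REGISTRY: Dict[str, Callable[[str], dict]] = {"v1": predict_v1, "v2": predict_v2}
DEFAULT_VERSION = "v1"  # unversioned requests stay on the oldest supported version
PATH_VERSION = re.compile(r"^/(v\d+)/")


def resolve_version(path: str, headers: Mapping[str, str]) -> str:
    """Pick the API version from the URL path first, then the header, then the default."""
    match = PATH_VERSION.match(path)
    # Real HTTP headers are case-insensitive; a plain dict is used here for brevity.
    # "X-API-Version" is a hypothetical header name chosen for illustration.
    version = match.group(1) if match else headers.get("X-API-Version", DEFAULT_VERSION)
    if version not in MODEL_REGISTRY:
        raise LookupError(f"unsupported API version: {version}")
    return version


# Usage
version = resolve_version("/v2/predict", {})
print(version, MODEL_REGISTRY[version]("A good movie"))  # v2 {'label': 'positive', 'num_tokens': 3}
```

Defaulting unversioned requests to the oldest supported version is one conservative choice; some APIs instead require an explicit version on every call.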

Combining performance, authentication, and versioning requires thoughtful API design. It involves establishing clear protocols for managing multiple model versions, implementing secure access controls, and optimizing response times. When done correctly, it lets organizations deploy AI solutions confidently, scale efficiently, and adapt swiftly to changing technological landscapes.

Designing APIs for AI models

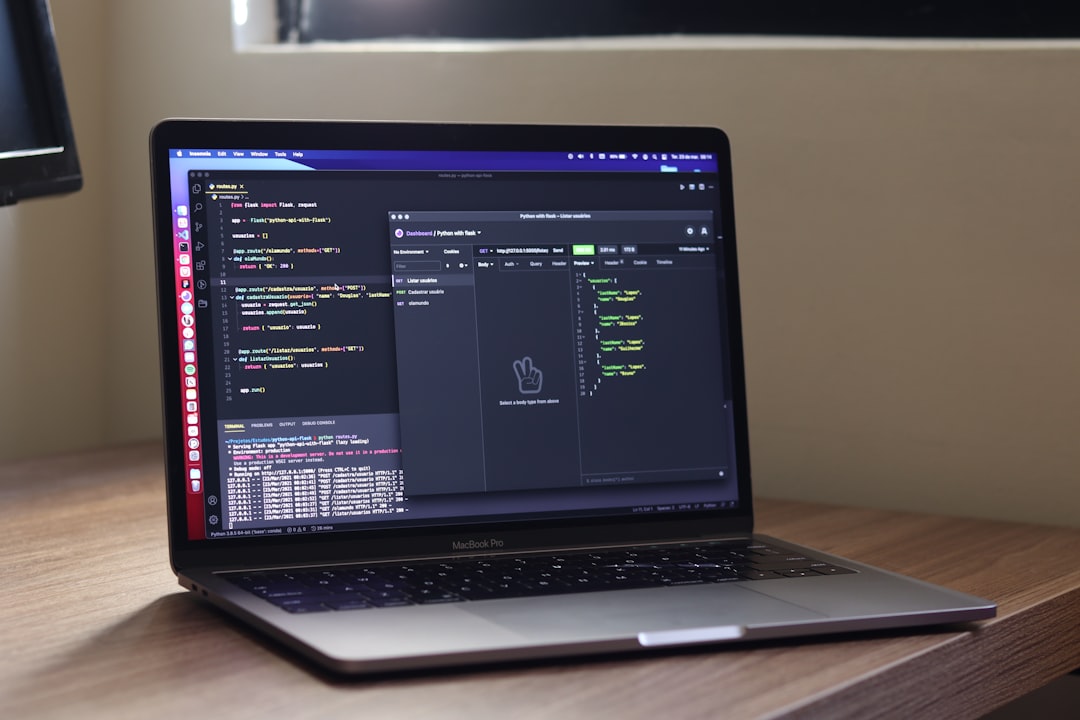

Designing APIs for AI models involves multiple interconnected considerations. Performance is paramount; AI inference often demands significant computational resources, so APIs must be optimized for low latency and high throughput. Employing techniques like caching, load balancing, and edge deployment can help meet these demands. Authentication strategies, such as OAuth 2.0 or API keys, are critical for controlling access and safeguarding data privacy, especially when APIs expose sensitive or proprietary AI models.
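Caching is the simplest of these techniques to illustrate. Below is a minimal in-process LRU cache sketched in Python. It assumes inference is deterministic for a given input (for example, no random sampling), and it includes the model version in the cache key so that a newly deployed version never returns answers cached from the previous one. A deployment with several instances behind a load balancer would typically use a shared cache instead.

```python
from collections import OrderedDict
from collections.abc import Hashable
from typing import Any, Callable, Tuple


class InferenceCache:
    """Small in-process LRU cache for deterministic inference results."""

    def __init__(self, max_entries: int = 1024) -> None:
        self.max_entries = max_entries
        self._entries: "OrderedDict[Tuple[str, Hashable], Any]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, model_version: str, payload: Hashable,
                       compute: Callable[[], Any]) -> Any:
        # Including the model version in the key keeps results from different versions apart.
        key = (model_version, payload)
        if key in self._entries:
            self._entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._entries[key]
        self.misses += 1
        result = compute()
        self._entries[key] = result
        if len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)  # evict the least recently used entry
        return result


def run_model() -> dict:
    # Hypothetical stand-in for an expensive model call.
    return {"label": "positive"}


# Usage: the repeated v1 request is a cache hit; the v2 request is not.
cache = InferenceCache(max_entries=2)
cache.get_or_compute("v1", "great product", run_model)
cache.get_or_compute("v1", "great product", run_model)
cache.get_or_compute("v2", "great product", run_model)
print(cache.hits, cache.misses)  # 1 2
```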

Versioning is another cornerstone of effective API management. As AI models are regularly retrained or fine-tuned, APIs need to support multiple versions simultaneously. Strategies like URL path versioning (`/v1/model`, `/v2/model`) or header-based version negotiation provide clarity and flexibility. Semantic versioning (MAJOR.MINOR.PATCH, e.g., moving from 1.4.2 to 2.0.0) helps communicate the nature of updates: a major increment signals a breaking change, while minor and patch increments signal backward-compatible features and fixes.
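Under the Semantic Versioning convention, the component that changed tells clients how careful an upgrade needs to be. The Python sketch below classifies an upgrade on that basis. It is a simplification: it tolerates a leading `v` (a common convention, not part of the SemVer spec) and does not handle pre-release or build suffixes such as `2.0.0-rc.1`.

```python
from typing import Tuple


def parse_semver(version: str) -> Tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH', tolerating a leading 'v'."""
    core = version[1:] if version.startswith("v") else version
    parts = core.split(".")
    if len(parts) != 3 or not all(p.isdigit() for p in parts):
        raise ValueError(f"expected MAJOR.MINOR.PATCH, got {version!r}")
    major, minor, patch = (int(p) for p in parts)
    return major, minor, patch


def classify_change(old: str, new: str) -> str:
    """Classify an upgrade by which SemVer component changed."""
    before, after = parse_semver(old), parse_semver(new)
    if after <= before:
        raise ValueError("new version must be greater than the old version")
    if after[0] != before[0]:
        return "major"  # breaking change: clients need a migration path
    if after[1] != before[1]:
        return "minor"  # new, backward-compatible functionality
    return "patch"      # backward-compatible fix


# Usage
print(classify_change("1.4.2", "2.0.0"))    # major
print(classify_change("v1.4.2", "v1.5.0"))  # minor
print(classify_change("1.4.2", "1.4.3"))    # patch
```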

Standardized request and response formats enhance interoperability across diverse clients. Using JSON or Protocol Buffers ensures consistent communication, while including version information in responses promotes transparency. Automated documentation, changelogs, and migration guides are vital for developer experience, helping users adapt to new API versions with minimal disruption.
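As an illustration, here is a sketch of a standardized JSON response envelope in Python. The field names (`api_version`, `model_version`, `request_id`, `data`, `error`) and the model identifier `sentiment-2.1.0` are assumptions for illustration, not a standard; the point is that every response carries version information and has the same shape whether the request succeeded or failed.

```python
import json
from datetime import datetime, timezone
from typing import Any, Optional


def build_response(
    api_version: str,
    model_version: str,
    request_id: str,
    prediction: Any = None,
    error: Optional[str] = None,
) -> str:
    """Wrap a prediction (or an error) in one consistent, versioned JSON envelope."""
    envelope = {
        "api_version": api_version,      # contract version the client called
        "model_version": model_version,  # exact model that produced the result
        "request_id": request_id,        # lets clients and operators correlate logs
        "created_at": datetime.now(timezone.utc).isoformat(),
        "data": prediction if error is None else None,
        "error": error,
    }
    return json.dumps(envelope)


# Usage (hypothetical identifiers)
print(build_response("v2", "sentiment-2.1.0", "req-123", {"label": "positive"}))
```

Reporting the exact model version alongside the API version helps when investigating a changed prediction: the API contract may be unchanged while the model behind it was updated.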

Monitoring and observability tools give insights into API usage and model performance. AI-specific metrics such as inference latency, error rates, and model drift enable proactive management. For example, integrating AI model monitoring can detect when a model’s accuracy declines, prompting retraining or version updates.
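A minimal Python sketch of two of these metrics, tail latency and error rate, is shown below. It keeps every sample in memory and uses a nearest-rank percentile; the thresholds in the hypothetical `needs_attention` helper (500 ms p95, 1% errors) are placeholder values. A real system would typically use a time window and a metrics library, and drift detection, which compares input or prediction distributions over time, is beyond this sketch.

```python
import math
from dataclasses import dataclass, field
from typing import List


@dataclass
class EndpointStats:
    """Accumulated request outcomes for one endpoint or model version."""
    latencies_ms: List[float] = field(default_factory=list)
    errors: int = 0

    def record(self, latency_ms: float, ok: bool) -> None:
        self.latencies_ms.append(latency_ms)
        if not ok:
            self.errors += 1

    def error_rate(self) -> float:
        return self.errors / len(self.latencies_ms) if self.latencies_ms else 0.0

    def percentile(self, p: float) -> float:
        """Nearest-rank percentile, 0 < p <= 100, of the recorded latencies."""
        if not self.latencies_ms:
            raise ValueError("no samples recorded")
        ordered = sorted(self.latencies_ms)
        rank = math.ceil(p * len(ordered) / 100)
        return ordered[max(rank, 1) - 1]


def needs_attention(stats: EndpointStats, p95_budget_ms: float = 500.0,
                    max_error_rate: float = 0.01) -> bool:
    # Placeholder thresholds; real values come from your service-level objectives.
    return stats.percentile(95) > p95_budget_ms or stats.error_rate() > max_error_rate


# Usage: ten requests, one of which failed.
stats = EndpointStats()
samples = [120.0, 95.0, 410.0, 130.0, 88.0, 102.0, 97.0, 640.0, 110.0, 105.0]
for i, latency in enumerate(samples):
    stats.record(latency, ok=(i != 7))
print(stats.percentile(95), stats.error_rate())  # 640.0 0.1
```

With only ten samples the p95 is simply the slowest request, which is one reason tail-latency metrics need a reasonable sample size to be meaningful.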

Security measures extend beyond authentication. Rate limiting protects the API from overload and abuse, while content moderation and bias detection safeguards support ethical AI deployment. Data privacy must also be prioritized through encryption and anonymization, which help maintain compliance with regulations like GDPR or HIPAA.
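Rate limiting is commonly implemented with a token bucket, one standard technique among several. The Python sketch below is a minimal single-process version. The `cost` parameter is an assumption added to show how heavier AI requests (say, long prompts) could be charged more than light ones, and the injectable `clock` exists only to make the behavior testable. In practice you would typically keep one bucket per API key, often in a shared store.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity`, refilled continuously at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity  # start full so new clients can burst immediately
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # caller should reject, e.g. with HTTP 429 Too Many Requests


# Usage with a fake clock so the behavior is deterministic.
fake_now = [0.0]
bucket = TokenBucket(capacity=2, rate=1.0, clock=lambda: fake_now[0])
print(bucket.allow(), bucket.allow(), bucket.allow())  # True True False
fake_now[0] = 1.0  # one second later, one token has been refilled
print(bucket.allow())  # True
```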

In summary, building scalable and secure APIs for AI models involves layered strategies: optimizing performance, implementing robust authentication, managing multiple model versions, and maintaining rigorous security practices. These components collectively ensure the long-term success of AI-driven services.

How this helps you

Understanding how to build APIs for AI models, with attention to performance, authentication, and versioning, directly benefits developers, product managers, and organizations aiming to deploy AI solutions at scale. Here’s how this knowledge translates into practical AI integration:

Enhancing Scalability and Reliability

By adopting best practices in API design, such as load balancing, caching, and edge deployment, developers can substantially improve the scalability of AI services. Proper authentication mechanisms block unauthorized access while enabling per-client usage analytics and rate limiting. Together, these measures help AI services absorb high traffic volumes without degrading, making solutions more reliable for end users. Moreover, supporting multiple API versions allows seamless upgrades, reducing downtime during model updates. For example, a company deploying a natural language processing API can introduce a new, more accurate model version without disrupting existing clients, thanks to strategic version management.
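One common way to introduce a new model version gradually is a canary rollout, a technique not named above. The Python sketch below assumes hypothetical version labels `v1` (stable) and `v2` (canary) and hashes the client ID, so each client is consistently served by the same version across requests instead of flipping between them.

```python
import hashlib


def choose_model_version(client_id: str, canary_percent: int,
                         stable: str = "v1", canary: str = "v2") -> str:
    """Deterministically send roughly `canary_percent`% of clients to the canary version."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    digest = hashlib.sha256(client_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99 per client
    return canary if bucket < canary_percent else stable


# Usage: start small, then raise the percentage while the canary's metrics hold up.
print(choose_model_version("client-42", canary_percent=5))
```

Because each client's bucket never changes, raising `canary_percent` only moves additional clients onto the canary; clients already on it stay there, and setting it back to 0 is an immediate rollback.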

Securing Sensitive Data and Ensuring Compliance

AI APIs often process sensitive or proprietary data, making security paramount. Implementing OAuth, JWT, or API keys ensures only authorized users can access AI functionalities. Additionally, integrating security best practices like encryption, anonymization, and content moderation helps protect user data and prevent misuse. For organizations operating under regulatory frameworks like GDPR or HIPAA, these measures are essential to maintain compliance. Proper security not only shields data but also builds user trust and brand reputation. For instance, healthcare providers deploying AI diagnostics APIs must ensure patient data remains confidential and protected from breaches.
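As one small piece of this, request records can be scrubbed before they are logged. The Python sketch below pseudonymizes a user ID with a keyed hash (HMAC-SHA256) and masks email addresses in free text. The key, the record fields (`user_id`, `text`), and the email pattern are hypothetical and deliberately simple; note that pseudonymization is weaker than anonymization, and under GDPR pseudonymized data is generally still treated as personal data.

```python
import hashlib
import hmac
import re

# Hypothetical secret for illustration only; load it from a secrets manager in practice.
PSEUDONYM_KEY = b"replace-with-a-secret-from-your-vault"

# Deliberately simple email pattern; real PII detection needs much broader coverage.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def pseudonymize(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Keyed hash: the same input always maps to the same token,
    but the token cannot be recomputed without the key."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_for_logging(record: dict) -> dict:
    """Return a copy of a request record with the user ID pseudonymized and emails masked."""
    cleaned = dict(record)  # never mutate the caller's record
    if "user_id" in cleaned:
        cleaned["user_id"] = pseudonymize(str(cleaned["user_id"]))
    if "text" in cleaned:
        cleaned["text"] = EMAIL_PATTERN.sub("[EMAIL]", cleaned["text"])
    return cleaned


# Usage
print(scrub_for_logging({"user_id": "patient-42", "text": "Contact jane.doe@example.com"}))
```

Using a keyed hash rather than a plain SHA-256 of the ID matters: without the key, an attacker cannot simply hash candidate IDs to reverse the mapping, yet the same user still maps to the same token, so usage analytics keep working.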

Streamlining Model Maintenance and Updates

AI models require ongoing monitoring, retraining, and version control to maintain performance. Tools for model tracking, such as version repositories (model registries) and automated performance monitoring, help teams see how each model version performs over time and pinpoint when quality changes. Versioning strategies enable smooth transitions between models, minimizing service disruptions. Additionally, automating retraining workflows allows organizations to adapt quickly to evolving data and user needs. For example, a recommendation engine can automatically switch to a newer model version once it outperforms the current one on agreed metrics, so users keep receiving relevant suggestions without downtime.
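The automatic switch described above needs an explicit promotion rule. The Python sketch below is one simple, hypothetical rule: promote the candidate only if it was evaluated on enough data and beats the current model by a margin. The thresholds (`min_gain=0.01`, `min_samples=1000`) and version labels are placeholders, and the rule performs no statistical significance test; a real pipeline might also check latency, fairness, or business metrics before switching.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    version: str
    accuracy: float   # fraction correct on the same held-out evaluation set
    num_samples: int  # how many evaluation examples the accuracy is based on


def should_promote(current: EvalResult, candidate: EvalResult,
                   min_gain: float = 0.01, min_samples: int = 1000) -> bool:
    """Promote only with enough evidence and a clear improvement (placeholder thresholds)."""
    if candidate.num_samples < min_samples:
        return False  # not enough evidence yet
    return candidate.accuracy >= current.accuracy + min_gain


def select_serving_version(current: EvalResult, candidate: EvalResult) -> str:
    return candidate.version if should_promote(current, candidate) else current.version


# Usage
current = EvalResult("v3", accuracy=0.80, num_samples=5000)
candidate = EvalResult("v4", accuracy=0.83, num_samples=5000)
print(select_serving_version(current, candidate))  # v4
```

Requiring a margin rather than any improvement at all avoids flapping between two versions whose scores differ only by noise.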

Conclusion

Building APIs for AI models with sound performance, authentication, and versioning practices is a vital skill set for modern AI deployment. It helps keep AI services scalable, secure, and adaptable to ongoing change. Effective API design not only optimizes performance but also safeguards sensitive data and simplifies model updates, factors that directly influence user experience, trust, and operational efficiency.

As AI continues to permeate diverse industries, mastering these best practices becomes increasingly important. Developers and organizations must prioritize robust authentication, thoughtful version management, and scalable infrastructure to support long-term growth. Implementing these principles allows businesses to deliver reliable, secure, and future-proof AI solutions that meet evolving user demands.

Whether you’re deploying a simple API or managing complex AI ecosystems, understanding and applying these strategies will elevate your projects, reduce risks, and accelerate innovation. Take action today by integrating these best practices into your API development workflows—your AI models and users will thank you.